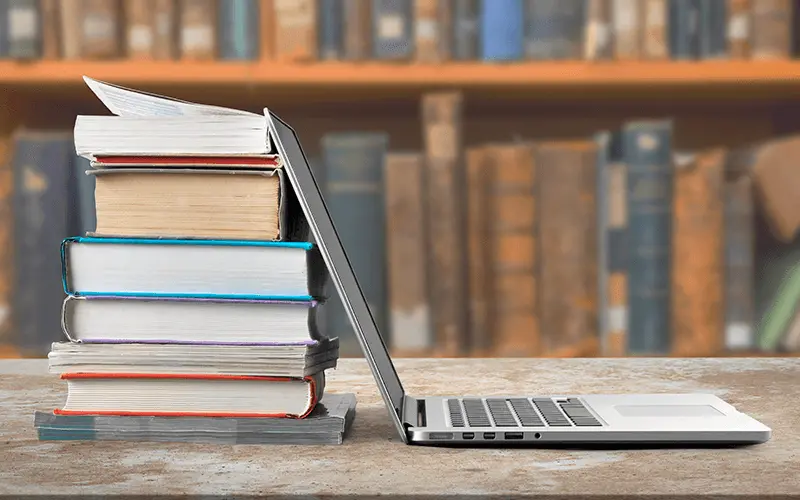

In an age of glowing screens and instant digital downloads, the printed book feels almost like an artifact. Yet it endures, a testament to its tactile pleasure, the scent of its pages, and the timeless ritual of turning them. While digital formats offer convenience, the printed book remains a powerful cultural symbol. For booksellers, however, this coexistence has created a new set of challenges, transforming them from mere merchants of books into custodians of a culture, fighting for survival in an increasingly digital world.

The Rise of the Digital Realm: A New Challenger

The past two decades have seen a seismic shift in the publishing landscape, driven by two primary forces:

E-books and Digital Readers:

The advent of devices like the Kindle and Kobo made it possible to carry thousands of titles in one’s pocket. For readers who prioritize convenience, storage, and cost, e-books offer a compelling alternative.

Online Retail Giants:

The sheer scale and speed of online behemoths like Amazon have disrupted traditional retail. They offer a vast catalogue, often at discounted prices, delivered straight to the customer’s doorstep, bypassing the physical bookstore entirely.

This dual assault has left the traditional bookseller on the front lines of a battle for relevance.

The Bookseller’s Battle: Challenges on Every Shelf

The modern bookseller faces a formidable array of challenges that go far beyond just selling books:

Shrinking Margins: The deep discounts offered by online retailers force physical bookstores to compete on price, a losing battle. This erodes their already slim profit margins, making it difficult to cover overheads like rent and staff salaries.

The “Showrooming” Problem: Customers frequently visit bookstores to browse, discover new titles, and get recommendations from staff, only to then purchase the book online for a lower price. The physical store becomes a free showroom for online competitors.

Inventory Management: Deciding which titles to stock is a delicate art. A small bookstore can’t possibly compete with the endless digital catalogue. It must curate a collection that is both commercially viable and reflective of its community’s interests, which is a constant guessing game.

High Overheads: Brick-and-mortar stores come with significant costs: rent, electricity, maintenance, and staff wages. These fixed costs are a heavy burden, especially with fluctuating foot traffic.

The “Experience” Paradox: While physical bookstores are valued for the browsing experience, community feel, and expert recommendations, translating this intangible value into sales is a constant struggle. The very thing that makes them unique isn’t enough to guarantee profitability.

The Fight for Survival: From Bookstore to Cultural Hub

The booksellers who are thriving today have realized that their stores can’t simply be places to buy books. They must become something more: vital cultural hubs in their communities. Their strategy is a masterclass in adapting to the digital age:

Curated Collections: Rather than trying to stock everything, they specialize. They might focus on local authors, a specific genre (like sci-fi or history), or independent publishers, creating a unique identity that online retailers can’t replicate.

Community Events: Bookstores are becoming venues for author readings, book clubs, poetry slams, children’s story hours, and workshops. These events foster a sense of community and provide a reason for people to step away from their screens and gather.

Expert Recommendations: The biggest advantage a physical bookstore has is its knowledgeable staff. They offer personalized recommendations and a human touch that no algorithm can match.

Partnerships: Thriving bookstores collaborate with local cafes, schools, and literary festivals to host events and cross-promote one another. In doing so, a bookstore can become a linchpin of the local cultural ecosystem.

Diversification: Many bookstores now sell coffee, stationery, unique gifts, and other merchandise to supplement their income and create a more compelling retail experience.

Conclusion

The printed book is not dead, but it has changed. It is no longer just a vessel for information; it is an object of value, an aesthetic choice, and a symbol of a more mindful way of consuming knowledge. The bookseller is its guardian, a passionate advocate in a world of instant gratification. Their fight for survival is more than a commercial battle; it is a cultural one. By transforming their stores into vibrant community spaces, they are proving that in the digital age, a place where people can gather, browse, and connect over a shared love of reading is more essential than ever. The last page has yet to be turned.